SPARGO: Exploring and Exploiting the Geometric Landscape of Infinite-Dimensional Sparse Optimization

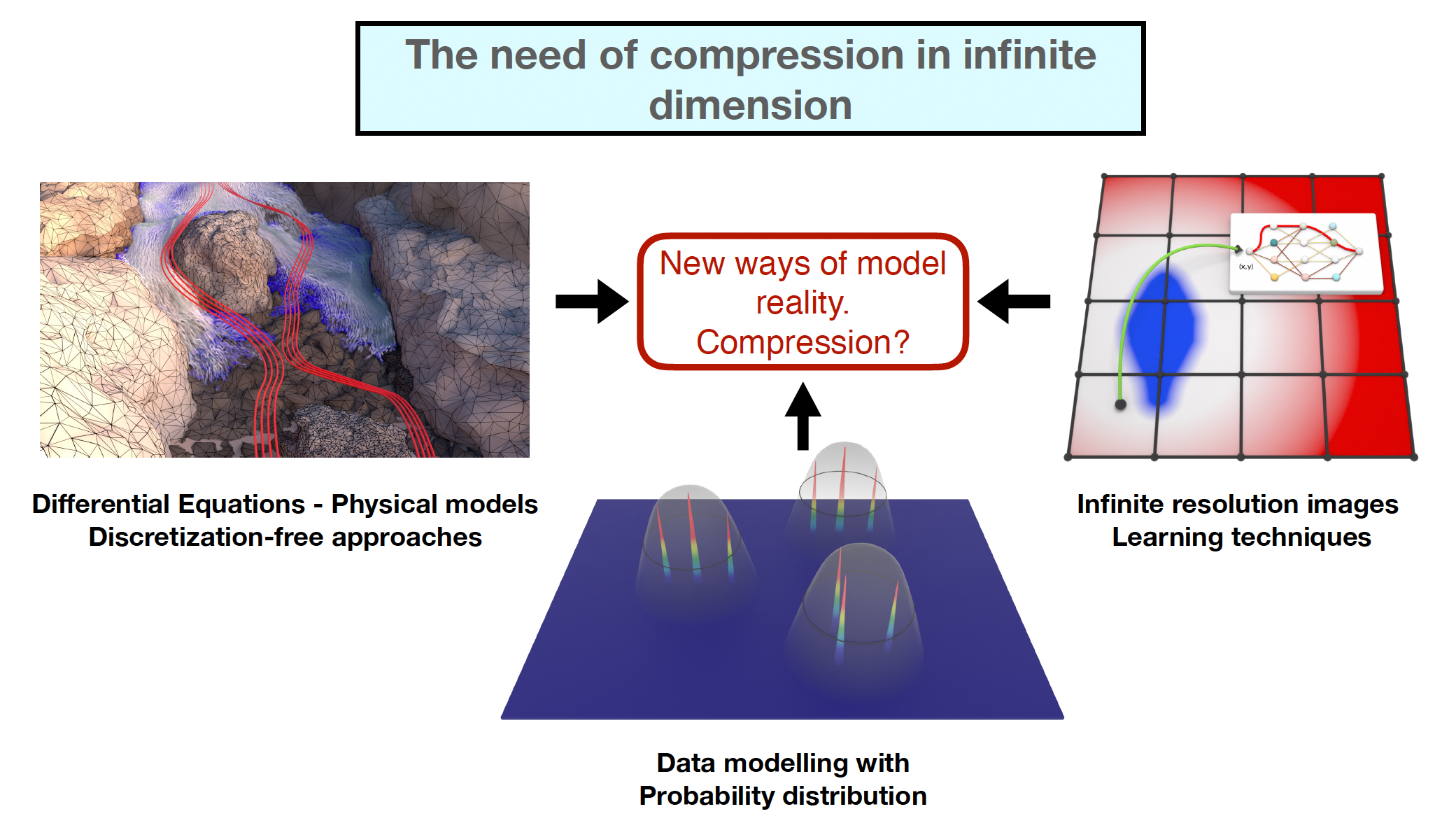

It is becoming clear that our society is overwhelmed by a growing flood of information, much of which is either redundant or unreliable. Even in our daily lives, we constantly face the challenge of filtering content from a variety of media. One way to tackle this challenge is through compression methods, which are already widely applied to finite-dimensional data. However, for the infinite-dimensional structures that are often used to describe modern data, such methods remain unexplored. SPARGO aims to bridge this gap by developing a new framework for infinite-dimensional compression.

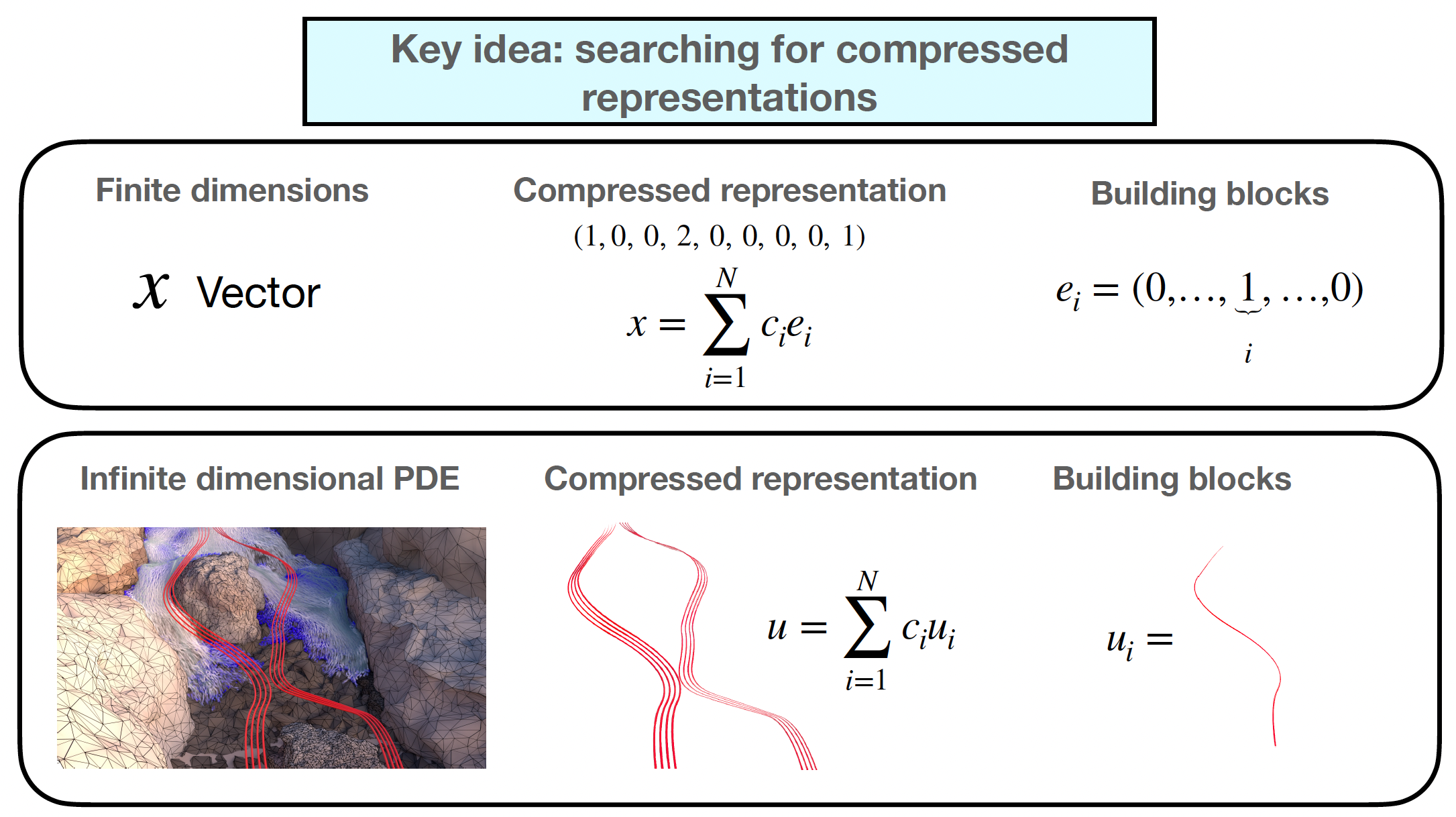

The development of compression and sparse methods in infinite dimensions has been limited by the lack of a suitable general notion of sparsity that would indicate how infinite-dimensional data should be optimally represented. It is generally accepted that compressing means optimally representing data using a small collection of simple building blocks. However, no general recipe has been given for understanding why certain building blocks should be used instead of others. SPARGO advocates that the sparsity features of a given infinite-dimensional model are determined by its geometric properties. It thus aims to characterize building blocks and provide sparse representations by studying the geometric structure of an optimization problem. Such sparse, or compressed, representations will then be instrumental in providing reconstruction guarantees and in designing compression algorithms that take advantage of optimal sparse representations.

SPARGO aims to achieve the following objectives:

• Sparse stability in infinite dimensions. Is infinite-dimensional sparsity preserved under perturbations of the optimization problem? SPARGO addresses this question by investigating whether the ability to solve an optimization problem using only a few building blocks is stable with respect to various types of perturbations.

• Analysis of Atomic Gradient Descent approaches. How can we use the sparse structure of infinite-dimensional optimization problems to design algorithms? Characterizing the sparse building blocks of a given optimization problem is instrumental in designing algorithms that exploit this structure in their iterates. SPARGO introduces a new class of infinite-dimensional sparse optimization algorithms, named Atomic Gradient Descent methods, that is built on this principle.

Bibliography:

• Kristian Bredies, Marcello Carioni. Sparsity of solutions for variational inverse problems with finite-dimensional data. Calculus of Variations and Partial Differential Equations (2020)

• Marcello Carioni, Leonardo Del Grande. A general theory for exact sparse representation recovery in convex optimization. (2023)

• Marcello Carioni, José Iglesias, Daniel Walter. Extremal points and sparse optimization for generalized Kantorovich–Rubinstein norms. Foundations of Computational Mathematics (2024)

• Kristian Bredies, Marcello Carioni, Silvio Fanzon, Daniel Walter. Asymptotic linear convergence of fully-corrective generalized conditional gradient methods. Mathematical Programming (2025)

• Kristian Bredies, Marcello Carioni, Silvio Fanzon, Francisco Romero. A generalized conditional gradient method for dynamic inverse problems with optimal transport regularization. Foundations of Computational Mathematics (2024)

• Francesca Bartolucci, Marcello Carioni, José Iglesias, Yury Korolev, Emanuele Naldi, Stefano Vigogna. A Lipschitz spaces view of infinitely wide shallow neural networks. SIAM Journal on Mathematical Analysis (2024)